Content from Before we Start

Last updated on 2024-05-14

Estimated time: 40 minutes

Overview

Questions

- How to find your way around RStudio?

- How to interact with R?

- How to manage your environment?

- How to install packages?

Objectives

- Install latest version of R.

- Install latest version of RStudio.

- Navigate the RStudio GUI.

- Install additional packages using the packages tab.

- Install additional packages using R code.

What is R? What is RStudio?

The term “R” is used to refer to both the programming language and the software that interprets the scripts written using it.

RStudio is currently a very popular way to not only write your R scripts but also to interact with the R software. To function correctly, RStudio needs R and therefore both need to be installed on your computer.

To make it easier to interact with R, we will use RStudio. RStudio is the most popular IDE (Integrated Development Environment) for R. An IDE is a piece of software that provides tools to make programming easier.

You can also use the R Presentations feature to present your work in an HTML5 presentation mixing Markdown and R code. You can display these within RStudio or your browser. There are many options for customising your presentation slides, including an option for showing LaTeX equations. This can help you collaborate with others and is also useful in teaching and classroom settings.

Why learn R?

R does not involve lots of pointing and clicking, and that’s a good thing

The learning curve might be steeper than with other software but with R, the results of your analysis do not rely on remembering a succession of pointing and clicking, but instead on a series of written commands, and that’s a good thing! So, if you want to redo your analysis because you collected more data, you don’t have to remember which button you clicked in which order to obtain your results; you just have to run your script again.

Working with scripts makes the steps you used in your analysis clear, and the code you write can be inspected by someone else who can give you feedback and spot mistakes.

Working with scripts forces you to have a deeper understanding of what you are doing, and facilitates your learning and comprehension of the methods you use.

R code is great for reproducibility

Reproducibility is when someone else (including your future self) can obtain the same results from the same dataset when using the same analysis.

R integrates with other tools to generate manuscripts from your code. If you collect more data, or fix a mistake in your dataset, the figures and the statistical tests in your manuscript are updated automatically.

An increasing number of journals and funding agencies expect analyses to be reproducible, so knowing R will give you an edge with these requirements.

To further support reproducibility and transparency, there are also packages that help you with dependency management: keeping track of which packages we are loading and how they depend on the package version you are using. This helps you make sure existing workflows work consistently and continue doing what they did before.

Packages like renv let you “save” and “load” the state of your project library, also keeping track of the package version you use and the source it can be retrieved from.

R is interdisciplinary and extensible

With 10,000+ packages that can be installed to extend its capabilities, R provides a framework that allows you to combine statistical approaches from many scientific disciplines to best suit the analytical framework you need to analyze your data. For instance, R has packages for image analysis, GIS, time series, population genetics, and a lot more.

R works on data of all shapes and sizes

The skills you learn with R scale easily with the size of your dataset. Whether your dataset has hundreds or millions of lines, it won’t make much difference to you.

R is designed for data analysis. It comes with special data structures and data types that make handling of missing data and statistical factors convenient.

R can connect to spreadsheets, databases, and many other data formats, on your computer or on the web.

R produces high-quality graphics

The plotting functionalities in R are endless, and allow you to adjust any aspect of your graph to convey most effectively the message from your data.

R has a large and welcoming community

Thousands of people use R daily. Many of them are willing to help you through mailing lists and websites such as Stack Overflow, or on the RStudio community. Questions which are backed up with short, reproducible code snippets are more likely to attract knowledgeable responses.

Not only is R free, but it is also open-source and cross-platform

Anyone can inspect the source code to see how R works. Because of this transparency, there is less chance for mistakes, and if you (or someone else) find some, you can report and fix bugs.

Because R is open source and is supported by a large community of developers and users, there is a very large selection of third-party add-on packages which are freely available to extend R’s native capabilities.

RStudio extends what R can do, and makes it easier to write R code and interact with R.

A tour of RStudio

Knowing your way around RStudio

Let’s start by learning about RStudio, which is an Integrated Development Environment (IDE) for working with R.

The RStudio IDE open-source product is free under the Affero General Public License (AGPL) v3. The RStudio IDE is also available with a commercial license and priority email support from RStudio, Inc.

We will use the RStudio IDE to write code, navigate the files on our computer, inspect the variables we create, and visualize the plots we generate. RStudio can also be used for other things (e.g., version control, developing packages, writing Shiny apps) that we will not cover during the workshop.

One of the advantages of using RStudio is that all the information you need to write code is available in a single window. Additionally, RStudio provides many shortcuts, autocompletion, and highlighting for the major file types you use while developing in R. RStudio makes typing easier and less error-prone.

Getting set up

It is good practice to keep a set of related data, analyses, and text self-contained in a single folder called the working directory. All of the scripts within this folder can then use relative paths to files. Relative paths indicate where inside the project a file is located (as opposed to absolute paths, which point to where a file is on a specific computer). Working this way makes it a lot easier to move your project around on your computer and share it with others without having to directly modify file paths in the individual scripts.
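As a minimal sketch of the difference, the snippet below builds a relative path and the corresponding absolute path. The folder and file names (`data`, `SAFI_clean.csv`) are used here purely for illustration; `file.path()` and `getwd()` are base R functions.

```r
# Relative path: describes a location inside the project folder
relative_path <- file.path("data", "SAFI_clean.csv")
relative_path

# Absolute path: the same location, spelled out for this specific computer
absolute_path <- file.path(getwd(), relative_path)
absolute_path
```

The relative path stays the same wherever the project folder lives, whereas the absolute path changes as soon as the project is moved or shared.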

RStudio provides a helpful set of tools to do this through its “Projects” interface, which not only creates a working directory for you but also remembers its location (allowing you to quickly navigate to it). The interface also (optionally) preserves custom settings and open files to make it easier to resume work after a break.

Create a new project

- Under the File menu, click on New project, choose New directory, then New project

- Enter a name for this new folder (or “directory”) and choose a convenient location for it. This will be your working directory for the rest of the day (e.g., ~/data-carpentry)

- Click on Create project

- Create a new file where we will type our scripts. Go to File > New File > R script. Click the save icon on your toolbar and save your script as “script.R”.

The simplest way to open an RStudio project once it has been created is to navigate through your files to where the project was saved and double click on the .Rproj (blue cube) file. This will open RStudio and start your R session in the same directory as the .Rproj file. All your data, plots and scripts will now be relative to the project directory. RStudio projects have the added benefit of allowing you to open multiple projects at the same time, each open to its own project directory. This allows you to keep multiple projects open without them interfering with each other.

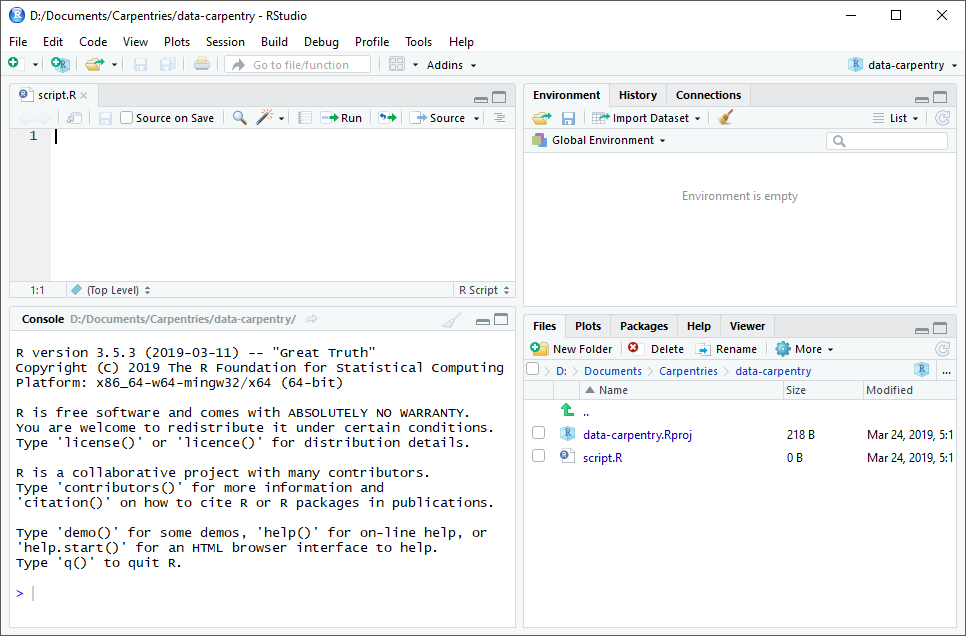

The RStudio Interface

Let’s take a quick tour of RStudio.

RStudio is divided into four “panes”. The placement of these panes and their content can be customized (see menu, Tools -> Global Options -> Pane Layout).

The Default Layout is:

- Top Left - Source: your scripts and documents

- Bottom Left - Console: what R would look and be like without RStudio

- Top Right - Environment/History: look here to see what you have done

- Bottom Right - Files and more: see the contents of the project/working directory here, like your script.R file

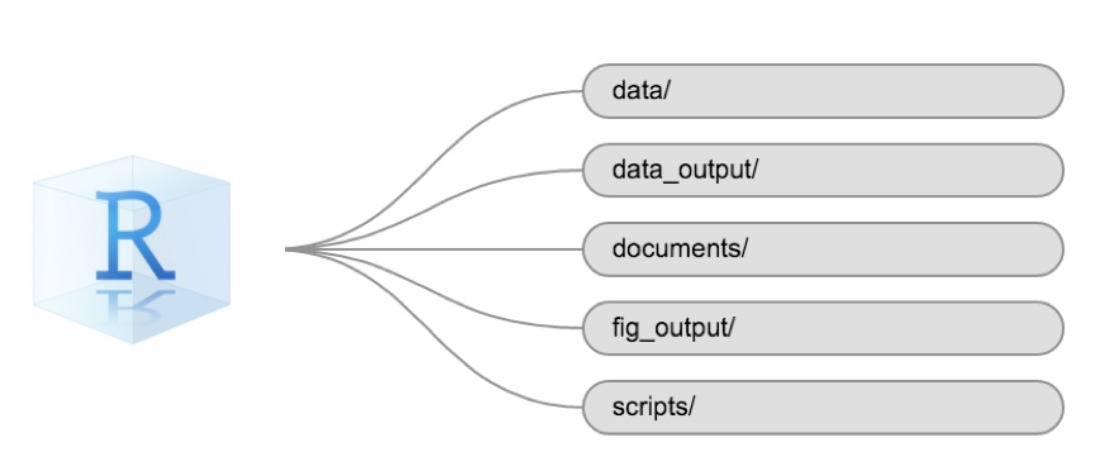

Organizing your working directory

Using a consistent folder structure across your projects will help keep things organized and make it easy to find/file things in the future. This can be especially helpful when you have multiple projects. In general, you might create directories (folders) for scripts, data, and documents. Here are some examples of suggested directories:

- data/: Use this folder to store your raw data and intermediate datasets. For the sake of transparency and provenance, you should always keep a copy of your raw data accessible and do as much of your data cleanup and preprocessing programmatically (i.e., with scripts, rather than manually) as possible.

- data_output/: When you need to modify your raw data, it might be useful to store the modified versions of the datasets in a different folder.

- documents/: Used for outlines, drafts, and other text.

- fig_output/: This folder can store the graphics that are generated by your scripts.

- scripts/: A place to keep your R scripts for different analyses or plotting.

You may want additional directories or subdirectories depending on your project needs, but these should form the backbone of your working directory.

The working directory

The working directory is an important concept to understand. It is the place where R will look for and save files. When you write code for your project, your scripts should refer to files in relation to the root of your working directory and only to files within this structure.

Using RStudio projects makes this easy and ensures that your working directory is set up properly. If you need to check it, you can use getwd(). If for some reason your working directory is not the same as the location of your RStudio project, it is likely that you opened an R script or RMarkdown file rather than your .Rproj file. You should close RStudio and open the .Rproj file by double clicking on the blue cube! If you ever need to modify your working directory in a script, setwd('my/path') changes the working directory. This should be used with caution since it makes analyses hard to share across devices and with other users.
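The following is a small sketch of checking the working directory and, if it is really necessary, changing it temporarily. It uses `tempdir()` purely as a stand-in target folder (an assumption made for illustration). `setwd()` invisibly returns the previous working directory, which makes it easy to switch back.

```r
# Where is R currently looking for files?
start_wd <- getwd()
start_wd

# Temporarily switch to another folder; setwd() invisibly returns the old one
old_wd <- setwd(tempdir())
getwd()

# Switch back so the rest of the session is unaffected
setwd(old_wd)
getwd()
```

Within an RStudio project you should rarely need this; opening the .Rproj file sets the working directory for you.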

Downloading the data and getting set up

For this lesson we will use the following folders in our working directory: data/, data_output/ and fig_output/. Let’s write them all in lowercase to be consistent. We can create them using the RStudio interface by clicking on the “New Folder” button in the file pane (bottom right), or directly from R by typing at the console:

R

dir.create("data")

dir.create("data_output")

dir.create("fig_output")
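If you re-run `dir.create()` for a folder that already exists, R issues a warning. One possible way to make the folder setup safe to re-run is sketched below. To avoid touching your real project, this sketch creates the folders inside a temporary directory; `project_root` is a hypothetical stand-in for your project folder.

```r
# Hypothetical project folder inside R's temporary directory
project_root <- file.path(tempdir(), "example_project")
dir.create(project_root, showWarnings = FALSE)

# Create each folder only if it does not exist yet
folders <- c("data", "data_output", "fig_output")
for (folder in folders) {
  folder_path <- file.path(project_root, folder)
  if (!dir.exists(folder_path)) {
    dir.create(folder_path)
  }
}

# Check that all three folders are now present
dir.exists(file.path(project_root, folders))
```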

You can either download the data used for this lesson from GitHub or with R. You can copy the data from this GitHub link and paste it into a file called SAFI_clean.csv in the data/ directory you just created. Or you can do this directly from R by copying and pasting this into your console (your instructor can place this chunk of code in the Etherpad):

R

download.file(

"https://raw.githubusercontent.com/datacarpentry/r-socialsci/main/episodes/data/SAFI_clean.csv",

"data/SAFI_clean.csv", mode = "wb"

)

Interacting with R

The basis of programming is that we write down instructions for the computer to follow, and then we tell the computer to follow those instructions. We write, or code, instructions in R because it is a common language that both the computer and we can understand. We call the instructions commands and we tell the computer to follow the instructions by executing (also called running) those commands.

There are two main ways of interacting with R: by using the console or by using script files (plain text files that contain your code). The console pane (in RStudio, the bottom left panel) is the place where commands written in the R language can be typed and executed immediately by the computer. It is also where the results will be shown for commands that have been executed. You can type commands directly into the console and press Enter to execute those commands, but they will be forgotten when you close the session.

Because we want our code and workflow to be reproducible, it is better to type the commands we want in the script editor and save the script. This way, there is a complete record of what we did, and anyone (including our future selves!) can easily replicate the results on their computer.

RStudio allows you to execute commands directly from the script editor by using the Ctrl + Enter shortcut (on Mac, Cmd + Return will work). The command on the current line in the script (indicated by the cursor) or all of the commands in selected text will be sent to the console and executed when you press Ctrl + Enter. If there is information in the console you do not need anymore, you can clear it with Ctrl + L. You can find other keyboard shortcuts in this RStudio cheatsheet about the RStudio IDE.

At some point in your analysis, you may want to check the content of a variable or the structure of an object without necessarily keeping a record of it in your script. You can type these commands and execute them directly in the console. RStudio provides the Ctrl + 1 and Ctrl + 2 shortcuts, which allow you to jump between the script and the console panes.

If R is ready to accept commands, the R console shows a > prompt. If R receives a command (by typing, copy-pasting, or sent from the script editor using Ctrl + Enter), R will try to execute it and, when ready, will show the results and come back with a new > prompt to wait for new commands.

If R is still waiting for you to enter more text, the console will show a + prompt. It means that you haven’t finished entering a complete command. This is likely because you have not ‘closed’ a parenthesis or quotation, i.e. you don’t have the same number of left-parentheses as right-parentheses or the same number of opening and closing quotation marks. When this happens, and you thought you finished typing your command, click inside the console window and press Esc; this will cancel the incomplete command and return you to the > prompt. You can then proofread the command(s) you entered and correct the error.

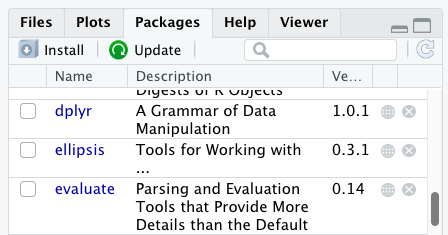

Installing additional packages using the packages tab

In addition to the core R installation, there are in excess of 10,000 additional packages which can be used to extend the functionality of R. Many of these have been written by R users and have been made available in central repositories, like the one hosted at CRAN, for anyone to download and install into their own R environment. You should have already installed the packages ‘ggplot2’ and ‘dplyr’. If you have not, please do so now using these instructions.

You can see if you have a package installed by looking in the packages tab (on the lower-right by default). You can also type the command installed.packages() into the console and examine the output.
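The output of `installed.packages()` can be long. As a rough sketch, the snippet below pulls out just the package names (the row names of the result) and checks whether particular packages are among them. The object names `installed` and `wanted` are illustrative.

```r
# Names of all packages installed in your R library
installed <- rownames(installed.packages())

# The stats package ships with R, so this should be TRUE
"stats" %in% installed

# Check the packages recommended for this lesson
wanted <- c("ggplot2", "dplyr")
wanted %in% installed

# List any that still need to be installed
setdiff(wanted, installed)
```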

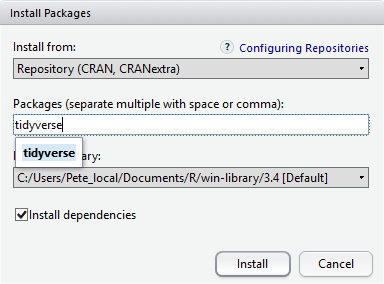

Additional packages can be installed from the ‘packages’ tab. On the packages tab, click the ‘Install’ icon and start typing the name of the package you want in the text box. As you type, packages matching your starting characters will be displayed in a drop-down list so that you can select them.

At the bottom of the Install Packages window is a check box labelled ‘Install dependencies’. This is ticked by default, which is usually what you want. Packages can (and do) make use of functionality built into other packages, so for the functionality contained in the package you are installing to work properly, there may be other packages which have to be installed with them. The ‘Install dependencies’ option makes sure that this happens.

Scroll through the packages tab down to ‘tidyverse’. You can also type a few characters into the search box. The ‘tidyverse’ package is really a package of packages, including ‘ggplot2’ and ‘dplyr’, both of which require other packages to run correctly. All of these packages will be installed automatically. Depending on what packages have previously been installed in your R environment, the install of ‘tidyverse’ could be very quick or could take several minutes. As the install proceeds, messages relating to its progress will be written to the console. You will be able to see all of the packages which are actually being installed.

Because the install process accesses the CRAN repository, you will need an Internet connection to install packages.

It is also possible to install packages from other repositories, as well as GitHub or the local file system, but we won’t be looking at these options in this lesson.

Installing additional packages using R code

If you were watching the console window when you started the install of ‘tidyverse’, you may have noticed that the line

R

install.packages("tidyverse")

was written to the console before the start of the installation messages.

You could also have installed the tidyverse packages by running this command directly at the R console.

We will be using another package called here throughout the workshop to manage paths and directories. We will discuss it in more detail in a later episode, but we will install it now in the console:

R

install.packages("here")

Content from Introduction to R

Last updated on 2024-05-14

Estimated time: 80 minutes

Overview

Questions

- What data types are available in R?

- What is an object?

- How can values be initially assigned to variables of different data types?

- What arithmetic and logical operators can be used?

- How can subsets be extracted from vectors?

- How does R treat missing values?

- How can we deal with missing values in R?

Objectives

- Define the following terms as they relate to R: object, assign, call, function, arguments, options.

- Assign values to objects in R.

- Learn how to name objects.

- Use comments to inform script.

- Solve simple arithmetic operations in R.

- Call functions and use arguments to change their default options.

- Inspect the content of vectors and manipulate their content.

- Subset values from vectors.

- Analyze vectors with missing data.

Creating objects in R

You can get output from R simply by typing math in the console:

R

3 + 5

OUTPUT

[1] 8

R

12 / 7

OUTPUT

[1] 1.714286

However, to do useful and interesting things, we need to assign values to objects. To create an object, we need to give it a name followed by the assignment operator <-, and the value we want to give it:

R

area_hectares <- 1.0

<- is the assignment operator. It assigns values on the right to objects on the left. So, after executing x <- 3, the value of x is 3. The arrow can be read as 3 goes into x.

For historical reasons, you can also use = for assignments, but not in every context. Because of the slight differences in syntax, it is good practice to always use <- for assignments. More generally we prefer the <- syntax over = because it makes it clear what direction the assignment is operating (left assignment), and it increases the readability of the code.
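One context where the two operators behave differently is inside a function call. In the sketch below (the object names `average_a` and `average_b` are illustrative), `=` matches a value to the argument named `x` of `mean()` without creating an object, whereas `<-` creates the object `x` as a side effect before passing it on.

```r
# Inside a call, `=` matches the value to the argument named x;
# no object called x is created by this line
average_a <- mean(x = c(1, 2, 3))
average_a

# Inside a call, `<-` first creates the object x, then passes it to mean()
average_b <- mean(x <- c(4, 5, 6))
average_b

# x now exists and holds the values assigned inside the call
x
```

Assigning inside a call like this is shown only to illustrate the difference; in everyday scripts it is usually clearer to create objects on their own line.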

In RStudio, typing Alt + - (push Alt at the same time as the - key) will write <- in a single keystroke on a PC, while typing Option + - (push Option at the same time as the - key) does the same on a Mac.

Objects can be given any name such as x, current_temperature, or subject_id. You want your object names to be explicit and not too long. They cannot start with a number (2x is not valid, but x2 is). R is case sensitive (e.g., age is different from Age). There are some names that cannot be used because they are reserved words in R (e.g., if, else, for; see the help page ?Reserved for a complete list). In general, even if it’s allowed, it’s best not to reuse the names of existing functions or objects (e.g., c, T, mean, data, df, weights). If in doubt, check the help to see if the name is already in use. It’s also best to avoid dots (.) within an object name as in my.dataset. There are many objects in R with dots in their names for historical reasons, but because dots have a special meaning in R (for methods) and other programming languages, it’s best to avoid them. The recommended writing style is called snake_case, which implies using only lowercase letters and numbers and separating each word with underscores (e.g., animals_weight, average_income). It is also recommended to use nouns for object names, and verbs for function names.

It’s important to be consistent in the styling of your code (where you put spaces, how you name objects, etc.). Using a consistent coding style makes your code clearer to read for your future self and your collaborators. In R, three popular style guides are Google’s, Jean Fan’s and the tidyverse’s. The tidyverse’s is very comprehensive and may seem overwhelming at first. You can install the lintr package to automatically check for issues in the styling of your code.

Objects vs. variables

What are known as objects in R are known as variables in many other programming languages. Depending on the context, object and variable can have drastically different meanings. However, in this lesson, the two words are used synonymously. For more information see: https://cran.r-project.org/doc/manuals/r-release/R-lang.html#Objects

When assigning a value to an object, R does not print anything. You can force R to print the value by using parentheses or by typing the object name:

R

area_hectares <- 1.0 # doesn't print anything

(area_hectares <- 1.0) # putting parentheses around the call prints the value of `area_hectares`

OUTPUT

[1] 1

R

area_hectares # and so does typing the name of the object

OUTPUT

[1] 1

Now that R has area_hectares in memory, we can do arithmetic with it. For instance, we may want to convert this area into acres (area in acres is 2.47 times the area in hectares):

R

2.47 * area_hectares

OUTPUT

[1] 2.47

We can also change an object’s value by assigning it a new one:

R

area_hectares <- 2.5

2.47 * area_hectares

OUTPUT

[1] 6.175

Assigning a value to one object does not change the values of other objects. For example, let’s store the plot’s area in acres in a new object, area_acres:

R

area_acres <- 2.47 * area_hectares

and then change area_hectares to 50.

R

area_hectares <- 50

The value of area_acres is still 6.175 because you have not re-run the line area_acres <- 2.47 * area_hectares since changing the value of area_hectares.
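The whole sequence can be replayed as a short sketch. It repeats the steps above and then re-runs the conversion so that area_acres reflects the new value of area_hectares.

```r
area_hectares <- 2.5
area_acres <- 2.47 * area_hectares   # computed from the value 2.5

area_hectares <- 50                  # area_acres is NOT updated by this line
area_acres                           # still 6.175

area_acres <- 2.47 * area_hectares   # re-run the conversion
area_acres                           # now 123.5
```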

Vectors and data types

A vector is the most common and basic data type in R, and is pretty much the workhorse of R. A vector is composed of a series of values, which can be either numbers or characters. We can assign a series of values to a vector using the c() function. For example we can create a vector of the number of household members for the households we’ve interviewed and assign it to a new object hh_members:

R

hh_members <- c(3, 7, 10, 6)

hh_members

OUTPUT

[1] 3 7 10 6

A vector can also contain characters. For example, we can have a vector of the building material used to construct our interview respondents’ walls (respondent_wall_type):

R

respondent_wall_type <- c("muddaub", "burntbricks", "sunbricks")

respondent_wall_type

OUTPUT

[1] "muddaub" "burntbricks" "sunbricks"

The quotes around “muddaub”, etc. are essential here. Without the quotes R will assume there are objects called muddaub, burntbricks and sunbricks. As these objects don’t exist in R’s memory, there will be an error message.

There are many functions that allow you to inspect the content of a vector. length() tells you how many elements are in a particular vector:

R

length(hh_members)

OUTPUT

[1] 4

R

length(respondent_wall_type)

OUTPUT

[1] 3

An important feature of a vector is that all of the elements are the same type of data. The function typeof() indicates the type of an object:

R

typeof(hh_members)

OUTPUT

[1] "double"

R

typeof(respondent_wall_type)

OUTPUT

[1] "character"

The function str() provides an overview of the structure of an object and its elements. It is a useful function when working with large and complex objects:

R

str(hh_members)

OUTPUT

 num [1:4] 3 7 10 6

R

str(respondent_wall_type)

OUTPUT

 chr [1:3] "muddaub" "burntbricks" "sunbricks"

You can use the c() function to add other elements to your vector:

R

possessions <- c("bicycle", "radio", "television")

possessions <- c(possessions, "mobile_phone") # add to the end of the vector

possessions <- c("car", possessions) # add to the beginning of the vector

possessions

OUTPUT

[1] "car" "bicycle" "radio" "television" "mobile_phone"

In the second line, we take the original vector possessions, add the value "mobile_phone" to the end of it, and save the result back into possessions. In the third line, we add the value "car" to the beginning, again saving the result back into possessions.

We can do this over and over again to grow a vector, or assemble a dataset. As we program, this may be useful to add results that we are collecting or calculating.

An atomic vector is the simplest R data type and is a linear vector of a single type. Above, we saw 2 of the 6 main atomic vector types that R uses: "character" and "numeric" (or "double"). These are the basic building blocks that all R objects are built from. The other 4 atomic vector types are:

- "logical" for TRUE and FALSE (the boolean data type)

- "integer" for integer numbers (e.g., 2L, the L indicates to R that it’s an integer)

- "complex" to represent complex numbers with real and imaginary parts (e.g., 1 + 4i) and that’s all we’re going to say about them

- "raw" for bitstreams that we won’t discuss further

You can check the type of your vector using the typeof() function and inputting your vector as the argument.
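As a quick illustration, typeof() can be called on one small example value of each of the six types. The example values themselves are arbitrary.

```r
typeof("muddaub")    # "character"
typeof(3.5)          # "double"
typeof(TRUE)         # "logical"
typeof(2L)           # "integer"
typeof(2)            # "double": without the L, whole numbers are stored as doubles
typeof(1 + 4i)       # "complex"
typeof(as.raw(255))  # "raw"
```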

Vectors are one of the many data structures that R uses. Other important ones are lists (list), matrices (matrix), data frames (data.frame), factors (factor) and arrays (array).

R implicitly converts them to all be the same type.

Vectors can be of only one data type. R tries to convert (coerce) the content of this vector to find a “common denominator” that doesn’t lose any information.

Only one. There is no memory of past data types, and the coercion happens the first time the vector is evaluated. Therefore, the TRUE in num_logical gets converted into a 1 before it gets converted into "1" in combined_logical.
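The objects num_logical and combined_logical are not defined in the text above. Assuming they were created along the following lines (these definitions, including char_logical, are an assumption made for illustration), the sketch below shows the two-step coercion just described.

```r
# Assumed definitions, for illustration only
num_logical <- c(1, 2, 3, TRUE)          # TRUE is coerced to the number 1
num_logical
typeof(num_logical)                      # "double"

char_logical <- c("a", "b", "c", TRUE)   # TRUE is coerced to the string "TRUE"
char_logical

# Combining both: the numbers (including the former TRUE) become strings
combined_logical <- c(num_logical, char_logical)
combined_logical

# Count how many elements are the string "TRUE"
sum(combined_logical == "TRUE")          # 1
```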

Exercise (continued)

You’ve probably noticed that objects of different types get converted into a single, shared type within a vector. In R, we call converting objects from one class into another class coercion. These conversions happen according to a hierarchy, whereby some types get preferentially coerced into other types. Can you draw a diagram that represents the hierarchy of how these data types are coerced?

Subsetting vectors

Subsetting (sometimes referred to as extracting or indexing) involves extracting one or more values based on their numeric placement or “index” within a vector. If we want to subset one or several values from a vector, we must provide one index or several indices in square brackets. For instance:

R

respondent_wall_type <- c("muddaub", "burntbricks", "sunbricks")

respondent_wall_type[2]

OUTPUT

[1] "burntbricks"

R

respondent_wall_type[c(3, 2)]

OUTPUT

[1] "sunbricks" "burntbricks"

We can also repeat the indices to create an object with more elements than the original one:

R

more_respondent_wall_type <- respondent_wall_type[c(1, 2, 3, 2, 1, 3)]

more_respondent_wall_type

OUTPUT

[1] "muddaub" "burntbricks" "sunbricks" "burntbricks" "muddaub"

[6] "sunbricks"

R indices start at 1. Programming languages like Fortran, MATLAB, Julia, and R start counting at 1, because that’s what human beings typically do. Languages in the C family (including C++, Java, Perl, and Python) count from 0 because that’s simpler for computers to do.
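A brief sketch of what counting from 1 means in practice, reusing the respondent_wall_type vector from above: the first element is at index 1 and the last is at the index given by length(). Asking for an index beyond the end of the vector returns NA rather than an error.

```r
respondent_wall_type <- c("muddaub", "burntbricks", "sunbricks")

respondent_wall_type[1]                             # first element: "muddaub"
respondent_wall_type[length(respondent_wall_type)]  # last element: "sunbricks"
respondent_wall_type[5]                             # beyond the end: NA
```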

Conditional subsetting

Another common way of subsetting is by using a logical vector. TRUE will select the element with the same index, while FALSE will not:

R

hh_members <- c(3, 7, 10, 6)

hh_members[c(TRUE, FALSE, TRUE, TRUE)]

OUTPUT

[1] 3 10 6

Typically, these logical vectors are not typed by hand, but are the output of other functions or logical tests. For instance, if you wanted to select only the values above 5:

R

hh_members > 5 # will return logicals with TRUE for the indices that meet the condition

OUTPUT

[1] FALSE TRUE TRUE TRUE

R

## so we can use this to select only the values above 5

hh_members[hh_members > 5]

OUTPUT

[1] 7 10 6

You can combine multiple tests using & (both conditions are true, AND) or | (at least one of the conditions is true, OR):

R

hh_members[hh_members < 4 | hh_members > 7]

OUTPUT

[1] 3 10

R

hh_members[hh_members >= 4 & hh_members <= 7]

OUTPUT

[1] 7 6

Here, < stands for “less than”, > for “greater than”, >= for “greater than or equal to”, <= for “less than or equal to”, and == for “equal to”. The double equal sign == is a test for numerical equality between the left and right hand sides, and should not be confused with the single = sign, which performs variable assignment (similar to <-).
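To see how such tests combine, it can help to look at the intermediate logical vectors before using them for subsetting. The sketch below reuses hh_members; the names fewer_than_4 and more_than_7 are illustrative.

```r
hh_members <- c(3, 7, 10, 6)

fewer_than_4 <- hh_members < 4   # TRUE FALSE FALSE FALSE
more_than_7 <- hh_members > 7    # FALSE FALSE TRUE FALSE

# OR: TRUE wherever at least one of the two tests is TRUE
fewer_than_4 | more_than_7       # TRUE FALSE TRUE FALSE

# == tests for equality; it does not assign anything
hh_members[hh_members == 7]      # 7
```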

A common task is to search for certain strings in a vector. One could use the “or” operator | to test for equality to multiple values, but this can quickly become tedious.

R

possessions <- c("car", "bicycle", "radio", "television", "mobile_phone")

possessions[possessions == "car" | possessions == "bicycle"] # returns both car and bicycle

OUTPUT

[1] "car" "bicycle"

The function %in% allows you to test if any of the elements of a search vector (on the left hand side) are found in the target vector (on the right hand side):

R

possessions %in% c("car", "bicycle")

OUTPUT

[1] TRUE TRUE FALSE FALSE FALSE

Note that the output is the same length as the search vector on the left hand side, because %in% checks whether each element of the search vector is found somewhere in the target vector. Thus, you can use %in% to select the elements in the search vector that appear in your target vector:

R

possessions %in% c("car", "bicycle", "motorcycle", "truck", "boat", "bus")

OUTPUT

[1] TRUE TRUE FALSE FALSE FALSE

R

possessions[possessions %in% c("car", "bicycle", "motorcycle", "truck", "boat", "bus")]

OUTPUT

[1] "car" "bicycle"

Missing data

As R was designed to analyze datasets, it includes the concept of missing data (which is uncommon in other programming languages). Missing data are represented in vectors as NA.

When doing operations on numbers, most functions will return NA if the data you are working with include missing values. This feature makes it harder to overlook the cases where you are dealing with missing data. You can add the argument na.rm = TRUE to calculate the result while ignoring the missing values.

R

rooms <- c(2, 1, 1, NA, 7)

mean(rooms)

OUTPUT

[1] NA

R

max(rooms)

OUTPUT

[1] NA

R

mean(rooms, na.rm = TRUE)

OUTPUT

[1] 2.75

R

max(rooms, na.rm = TRUE)

OUTPUT

[1] 7

If your data include missing values, you may want to become familiar with the functions is.na(), na.omit(), and complete.cases(). See below for examples.

R

## Extract those elements which are not missing values.

## The ! character is also called the NOT operator

rooms[!is.na(rooms)]

OUTPUT

[1] 2 1 1 7

R

## Count the number of missing values.

## The output of is.na() is a logical vector (TRUE/FALSE equivalent to 1/0) so the sum() function here is effectively counting

sum(is.na(rooms))

OUTPUT

[1] 1R

## Returns the object with incomplete cases removed. The returned object is an atomic vector of type `"numeric"` (or `"double"`).

na.omit(rooms)

OUTPUT

[1] 2 1 1 7

attr(,"na.action")

[1] 4

attr(,"class")

[1] "omit"

R

## Extract those elements which are complete cases. The returned object is an atomic vector of type `"numeric"` (or `"double"`).

rooms[complete.cases(rooms)]

OUTPUT

[1] 2 1 1 7

Recall that you can use the typeof() function to find

the type of your atomic vector.
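As a quick reminder of what that looks like when missing values are involved, here is a minimal sketch that reuses the short `rooms` vector from earlier. It only assumes base R.

```r
rooms <- c(2, 1, 1, NA, 7)

typeof(rooms)                 # "double": an NA does not change the vector's type
typeof(rooms[!is.na(rooms)])  # still "double" after dropping the NA
typeof(is.na(rooms))          # "logical": is.na() returns TRUE/FALSE values
```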

R

rooms <- c(1, 2, 1, 1, NA, 3, 1, 3, 2, 1, 1, 8, 3, 1, NA, 1)

rooms_no_na <- rooms[!is.na(rooms)]

# or

rooms_no_na <- na.omit(rooms)

# 2.

median(rooms, na.rm = TRUE)

OUTPUT

[1] 1

R

# 3.

rooms_above_2 <- rooms_no_na[rooms_no_na > 2]

length(rooms_above_2)

OUTPUT

[1] 4

Now that we have learned how to write scripts, and the basics of R’s data structures, we are ready to start working with the SAFI dataset we have been using in the other lessons, and learn about data frames.

Content from Starting with Data

Last updated on 2024-05-14

Estimated time: 80 minutes

Overview

Questions

- What is a data.frame?

- How can I read a complete csv file into R?

- How can I get basic summary information about my dataset?

- How can I change the way R treats strings in my dataset?

- Why would I want strings to be treated differently?

- How are dates represented in R and how can I change the format?

Objectives

- Describe what a data frame is.

- Load external data from a .csv file into a data frame.

- Summarize the contents of a data frame.

- Subset values from data frames.

- Describe the difference between a factor and a string.

- Convert between strings and factors.

- Reorder and rename factors.

- Change how character strings are handled in a data frame.

- Examine and change date formats.

What are data frames and tibbles?

Data frames are the de facto data structure for tabular data

in R, and what we use for data processing, statistics, and

plotting.

A data frame is the representation of data in the format of a table where the columns are vectors that all have the same length. Data frames are analogous to the more familiar spreadsheet in programs such as Excel, with one key difference. Because columns are vectors, each column must contain a single type of data (e.g., characters, integers, factors). For example, here is a figure depicting a data frame comprising a numeric, a character, and a logical vector.
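To make the "columns are vectors of one type" idea concrete, the following sketch builds a small data frame by hand. The column names mirror the SAFI data, but the values are made up for illustration only.

```r
# Hypothetical values, not taken from the SAFI dataset
households <- data.frame(
  no_membrs  = c(3, 7, 10),                  # numeric column
  village    = c("God", "God", "Chirodzo"),  # character column
  memb_assoc = c(TRUE, FALSE, TRUE),         # logical column
  stringsAsFactors = FALSE                   # keep strings as characters
)

str(households)            # one line per column, with its type
sapply(households, class)  # "numeric" "character" "logical"
```

Every column has the same length (three rows here), and each column holds a single type of data.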

Data frames can be created by hand, but most commonly they are

generated by the functions read_csv() or

read_table(); in other words, when importing spreadsheets

from your hard drive (or the web). We will now demonstrate how to import

tabular data using read_csv().

Presentation of the SAFI Data

SAFI (Studying African Farmer-Led Irrigation) is a study looking at farming and irrigation methods in Tanzania and Mozambique. The survey data was collected through interviews conducted between November 2016 and June 2017. For this lesson, we will be using a subset of the available data. For information about the full teaching dataset used in other lessons in this workshop, see the dataset description.

We will be using a subset of the cleaned version of the dataset that

was produced through cleaning in OpenRefine

(data/SAFI_clean.csv). In this dataset, the missing data is

encoded as “NULL”, each row holds information for a single interview

respondent, and the columns represent:

| column_name | description |
|---|---|
| key_ID | Added to provide a unique ID for each observation. (The instanceID field does this as well, but it is not as convenient to use.) |
| village | Village name |
| interview_date | Date of interview |
| no_membrs | How many members in the household? |
| years_liv | How many years have you been living in this village or neighboring village? |
| respondent_wall_type | What type of walls does their house have (from list) |
| rooms | How many rooms in the main house are used for sleeping? |
| memb_assoc | Are you a member of an irrigation association? |
| affect_conflicts | Have you been affected by conflicts with other irrigators in the area? |
| liv_count | Number of livestock owned. |
| items_owned | Which of the following items are owned by the household? (list) |
| no_meals | How many meals do people in your household normally eat in a day? |
| months_lack_food | Indicate in which of the last 12 months you faced a situation when you did not have enough food to feed the household. |
| instanceID | Unique identifier for the form data submission |

Importing data

You are going to load the data in R’s memory using the function

read_csv() from the readr

package, which is part of the tidyverse;

learn more about the tidyverse collection

of packages here.

readr gets installed as part of the

tidyverse installation. When you load the

tidyverse

(library(tidyverse)), the core packages (the packages used

in most data analyses) get loaded, including

readr.

Before proceeding, however, this is a good opportunity to talk about

conflicts. Certain packages we load can end up introducing function

names that are already in use by pre-loaded R packages. For instance,

when we load the tidyverse package below, we will introduce two

conflicting functions: filter() and lag().

This happens because filter and lag are

already functions used by the stats package (already pre-loaded in R).

What will happen now is that if we, for example, call the

filter() function, R will use the

dplyr::filter() version and not the

stats::filter() one. This happens because, if conflicted,

by default R uses the function from the most recently loaded package.

Conflicted functions may cause you some trouble in the future, so it is

important that we are aware of them so that we can properly handle them,

if we want.

To do so, we just need the following functions from the conflicted package:

- conflicted::conflict_scout(): shows us any conflicted functions.

- conflicted::conflict_prefer("function", "package_preferred"): allows us to choose the default function we want from now on.

It is also important to know that we can, at any time, just call the

function directly from the package we want, such as

stats::filter().
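The masking behaviour described above can be reproduced without installing anything. In this sketch a user-defined function named `sd` (a made-up stand-in for a conflicting package function) hides `stats::sd()`, much as `dplyr::filter()` hides `stats::filter()` once dplyr is loaded.

```r
# Define a function whose name clashes with stats::sd()
sd <- function(x) "my own sd()"

masked    <- sd(c(1, 2, 3))         # the most recent definition wins
qualified <- stats::sd(c(1, 2, 3))  # package::function always reaches stats

masked     # "my own sd()"
qualified  # 1

rm(sd)          # remove the masking definition
sd(c(1, 2, 3))  # 1 again: R falls back to stats::sd()
```

Writing the `package::function()` form removes any doubt about which version is called, at the cost of a little extra typing.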

Even with the use of an RStudio project, it can be difficult to learn

how to specify paths to file locations. Enter the here

package! The here package creates paths relative to the top-level

directory (your RStudio project). These relative paths work

regardless of where the associated source file lives inside

your project, like analysis projects with data and reports in different

subdirectories. This is an important contrast to using

setwd(), which depends on the way you order your files on

your computer.

Before we can use the read_csv() and here()

functions, we need to load the tidyverse and here packages.

Also, if you recall, the missing data is encoded as “NULL” in the

dataset. We’ll pass this to read_csv() through its na argument, so R will automatically convert

all the “NULL” entries in the dataset into NA.

R

library(tidyverse)

library(here)

interviews <- read_csv(

here("data", "SAFI_clean.csv"),

na = "NULL")

In the above code, we notice the here() function takes

folder and file names as inputs (e.g., "data",

"SAFI_clean.csv"), each enclosed in quotation marks

("") and separated by a comma. The here() function will

accept as many names as are necessary to navigate to a particular file

(e.g.,

here("analysis", "data", "surveys", "clean", "SAFI_clean.csv")).

The here() function can accept the folder and file names

in an alternate format, using a slash (“/”) rather than commas to

separate the names. The two methods are equivalent, so that

here("data", "SAFI_clean.csv") and

here("data/SAFI_clean.csv") produce the same result. (The

slash is used on all operating systems; backslashes are not used.)
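The equivalence of the two calling styles can be checked directly. This is a small sketch: the first part uses base R's `file.path()` as an analogue that needs no extra package, and the second part runs only if the here package happens to be installed.

```r
# Base R analogue: file.path() also joins components with "/"
p_parts <- file.path("data", "SAFI_clean.csv")
p_slash <- "data/SAFI_clean.csv"
identical(p_parts, p_slash)  # TRUE

# With the here package available, compare its two calling styles
if (requireNamespace("here", quietly = TRUE)) {
  identical(here::here("data", "SAFI_clean.csv"),
            here::here("data/SAFI_clean.csv"))
}
```

Unlike `file.path()`, `here()` returns a path that starts at the project's top-level directory, which is what makes it independent of the current working directory.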

If you were to type in the code above, it is likely that the

read.csv() function would appear in the automatically

populated list of functions. This function is different from the

read_csv() function, as it is included in the “base”

packages that come pre-installed with R. Overall,

read.csv() behaves similarly to read_csv(), with

a few notable differences. First, read.csv() coerces column

names with spaces and/or special characters to different names

(e.g. interview date becomes interview.date).

Second, read.csv() stores data as a

data.frame, whereas read_csv() stores data as a

tibble. We prefer tibbles because they have nice printing

properties among other desirable qualities. Read more about tibbles here.
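The two differences can be observed with base R alone. The sketch below reads a tiny, made-up CSV from a string instead of a file; note that the base function calls its missing-value argument `na.strings`, whereas `read_csv()` calls it `na`.

```r
# Made-up CSV text with spaces in the column names and "NULL" for missing data
csv_text <- "interview date,memb assoc\n2016-11-17,yes\n2016-11-16,NULL"

base_df <- read.csv(text = csv_text, na.strings = "NULL")

names(base_df)             # "interview.date" "memb.assoc": spaces became dots
class(base_df)             # "data.frame", not a tibble
is.na(base_df$memb.assoc)  # FALSE TRUE: "NULL" was converted to NA
```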

The last statement in the code above creates a data frame but

doesn’t output any data because, as you might recall, assignments

(<-) don’t display anything. (Note, however, that

read_csv may show informational text about the data frame

that is created.) If we want to check that our data has been loaded, we

can see the contents of the data frame by typing its name:

interviews in the console.

R

interviews

## Try also

## view(interviews)

## head(interviews)

OUTPUT

# A tibble: 131 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 1 God 2016-11-17 00:00:00 3 4 muddaub

2 2 God 2016-11-17 00:00:00 7 9 muddaub

3 3 God 2016-11-17 00:00:00 10 15 burntbricks

4 4 God 2016-11-17 00:00:00 7 6 burntbricks

5 5 God 2016-11-17 00:00:00 7 40 burntbricks

6 6 God 2016-11-17 00:00:00 3 3 muddaub

7 7 God 2016-11-17 00:00:00 6 38 muddaub

8 8 Chirodzo 2016-11-16 00:00:00 12 70 burntbricks

9 9 Chirodzo 2016-11-16 00:00:00 8 6 burntbricks

10 10 Chirodzo 2016-12-16 00:00:00 12 23 burntbricks

# ℹ 121 more rows

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>

Note

read_csv() assumes that fields are delimited by commas.

However, in several countries, the comma is used as a decimal separator

and the semicolon (;) is used as a field delimiter. If you want to read

in this type of file in R, you can use the read_csv2

function. It behaves like read_csv but uses

different defaults for the decimal mark and the field delimiter. If you

are working with another format, both can be specified by the user.

Check out the help for read_csv() by typing

?read_csv to learn more. There is also the

read_tsv() for tab-separated data files, and

read_delim() allows you to specify more details about the

structure of your file.
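To illustrate the semicolon-and-decimal-comma convention without needing a file, the sketch below uses base R's `read.csv2()` as a stand-in for readr's `read_csv2()`; it follows the same convention. The data values are invented.

```r
# Semicolon-delimited text with decimal commas (made-up values)
csv2_text <- "village;plot_area\nGod;1,5\nChirodzo;2,25"

plots <- read.csv2(text = csv2_text)  # base R defaults: sep = ";", dec = ","

plots$plot_area              # 1.50 2.25
is.numeric(plots$plot_area)  # TRUE: "1,5" was read as the number 1.5
```

Reading the same text with a comma-delimited reader would instead split each line at the decimal comma, so picking the matching function matters.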

Note that read_csv() actually loads the data as a

tibble. A tibble is an extension of R data frames used by

the tidyverse. When the data is read using

read_csv(), it is stored in an object of class

tbl_df, tbl, and data.frame. You

can see the class of an object with

R

class(interviews)

OUTPUT

[1] "spec_tbl_df" "tbl_df" "tbl" "data.frame"

As a tibble, the type of data included in each column is

listed in an abbreviated fashion below the column names. For instance,

here key_ID is a column of floating point numbers

(abbreviated <dbl> for the word ‘double’),

village is a column of characters

(<chr>) and the interview_date is a

column in the “date and time” format (<dttm>).

Inspecting data frames

When calling a tbl_df object (like

interviews here), there is already a lot of information

about our data frame being displayed such as the number of rows, the

number of columns, the names of the columns, and as we just saw the

class of data stored in each column. However, there are functions to

extract this information from data frames. Here is a non-exhaustive list

of some of these functions. Let’s try them out!

Size:

- dim(interviews) - returns a vector with the number of rows as the first element, and the number of columns as the second element (the dimensions of the object)

- nrow(interviews) - returns the number of rows

- ncol(interviews) - returns the number of columns

Content:

- head(interviews) - shows the first 6 rows

- tail(interviews) - shows the last 6 rows

Names:

- names(interviews) - returns the column names (synonym of colnames() for data.frame objects)

Summary:

- str(interviews) - structure of the object and information about the class, length and content of each column

- summary(interviews) - summary statistics for each column

- glimpse(interviews) - returns the number of columns and rows of the tibble, the names and class of each column, and previews as many values as will fit on the screen. Unlike the other inspecting functions listed above, glimpse() is not a “base R” function, so you need to have the dplyr or tibble package loaded to be able to execute it.

Note: most of these functions are “generic.” They can be used on other types of objects besides data frames or tibbles.
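The base R functions listed above can be tried on any data frame. This sketch uses a small made-up data frame (called `toy` here, a hypothetical name) so that it runs without the SAFI file.

```r
# Small stand-in data frame with invented values
toy <- data.frame(
  village   = c("God", "God", "Chirodzo", "Ruaca"),
  no_membrs = c(3, 7, 12, 5),
  rooms     = c(1, 1, 3, 2)
)

dim(toy)      # 4 3 (rows first, then columns)
nrow(toy)     # 4
ncol(toy)     # 3
names(toy)    # "village" "no_membrs" "rooms"
head(toy, 2)  # the first two rows
str(toy)      # compact structure of each column
summary(toy)  # per-column summary statistics
```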

Subsetting data frames

Our interviews data frame has rows and columns (it has 2

dimensions). In practice, we may not need the entire data frame; for

instance, we may only be interested in a subset of the observations (the

rows) or a particular set of variables (the columns). If we want to

access some specific data from it, we need to specify the “coordinates”

(i.e., indices) we want from it. Row numbers come first, followed by

column numbers.

Tip

Subsetting a tibble with [ always results

in a tibble. However, note this is not true in general for

data frames, so be careful! Different ways of specifying these

coordinates can lead to results with different classes. This is covered

in the Software Carpentry lesson R for

Reproducible Scientific Analysis.
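The contrast the tip describes is easy to see with a plain base R data frame. In this sketch (invented values), the class of the result depends on how the indices are written.

```r
df <- data.frame(key_ID = 1:3, village = c("God", "God", "Chirodzo"))

class(df[, 1])                # "integer": a single column is simplified to a vector
class(df[, 1, drop = FALSE])  # "data.frame": drop = FALSE keeps the table shape
class(df[1])                  # "data.frame": list-style single-bracket subsetting
class(df[1:2, ])              # "data.frame": several columns are never simplified
```

A tibble would return a tibble in all four cases, which is why code written for tibbles can behave differently when handed a plain data frame.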

R

## first element in the first column of the tibble

interviews[1, 1]

OUTPUT

# A tibble: 1 × 1

key_ID

<dbl>

1 1

R

## first element in the 6th column of the tibble

interviews[1, 6]

OUTPUT

# A tibble: 1 × 1

respondent_wall_type

<chr>

1 muddaub

R

## first column of the tibble (as a vector)

interviews[[1]]

OUTPUT

[1] 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18

[19] 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36

[37] 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54

[55] 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 127

[73] 133 152 153 155 178 177 180 181 182 186 187 195 196 197 198 201 202 72

[91] 73 76 83 85 89 101 103 102 78 80 104 105 106 109 110 113 118 125

[109] 119 115 108 116 117 144 143 150 159 160 165 166 167 174 175 189 191 192

[127] 126 193 194 199 200

R

## first column of the tibble

interviews[1]

OUTPUT

# A tibble: 131 × 1

key_ID

<dbl>

1 1

2 2

3 3

4 4

5 5

6 6

7 7

8 8

9 9

10 10

# ℹ 121 more rows

R

## first three elements in the 7th column of the tibble

interviews[1:3, 7]

OUTPUT

# A tibble: 3 × 1

rooms

<dbl>

1 1

2 1

3 1

R

## the 3rd row of the tibble

interviews[3, ]

OUTPUT

# A tibble: 1 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 3 God 2016-11-17 00:00:00 10 15 burntbricks

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>

R

## equivalent to head_interviews <- head(interviews)

head_interviews <- interviews[1:6, ]

: is a special function that creates numeric vectors of

integers in increasing or decreasing order; try 1:10 and

10:1, for instance.
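For reference, this is what those two calls return, together with `seq()`, the more general base R function for building sequences.

```r
1:10          # 1 2 3 4 5 6 7 8 9 10
10:1          # 10 9 8 7 6 5 4 3 2 1
typeof(1:10)  # "integer"

# seq() generalises `:`; the `by` argument sets the step size
seq(1, 10, by = 3)  # 1 4 7 10
```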

You can also exclude certain indices of a data frame using the

“-” sign:

R

interviews[, -1] # The whole tibble, except the first column

OUTPUT

# A tibble: 131 × 13

village interview_date no_membrs years_liv respondent_wall_type rooms

<chr> <dttm> <dbl> <dbl> <chr> <dbl>

1 God 2016-11-17 00:00:00 3 4 muddaub 1

2 God 2016-11-17 00:00:00 7 9 muddaub 1

3 God 2016-11-17 00:00:00 10 15 burntbricks 1

4 God 2016-11-17 00:00:00 7 6 burntbricks 1

5 God 2016-11-17 00:00:00 7 40 burntbricks 1

6 God 2016-11-17 00:00:00 3 3 muddaub 1

7 God 2016-11-17 00:00:00 6 38 muddaub 1

8 Chirodzo 2016-11-16 00:00:00 12 70 burntbricks 3

9 Chirodzo 2016-11-16 00:00:00 8 6 burntbricks 1

10 Chirodzo 2016-12-16 00:00:00 12 23 burntbricks 5

# ℹ 121 more rows

# ℹ 7 more variables: memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>

R

interviews[-c(7:131), ] # Equivalent to head(interviews)

OUTPUT

# A tibble: 6 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 1 God 2016-11-17 00:00:00 3 4 muddaub

2 2 God 2016-11-17 00:00:00 7 9 muddaub

3 3 God 2016-11-17 00:00:00 10 15 burntbricks

4 4 God 2016-11-17 00:00:00 7 6 burntbricks

5 5 God 2016-11-17 00:00:00 7 40 burntbricks

6 6 God 2016-11-17 00:00:00 3 3 muddaub

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>

Tibbles can be subset by calling indices (as shown

previously), but also by calling their column names directly:

R

interviews["village"] # Result is a tibble

interviews[, "village"] # Result is a tibble

interviews[["village"]] # Result is a vector

interviews$village # Result is a vector

In RStudio, you can use the autocompletion feature to get the full and correct names of the columns.

Exercise

1. Create a tibble (interviews_100) containing only the data in row 100 of the interviews dataset.

Now, continue using interviews for each of the following

activities:

2. Notice how nrow() gave you the number of rows in the tibble?

   - Use that number to pull out just that last row in the tibble.

   - Compare that with what you see as the last row using tail() to make sure it’s meeting expectations.

   - Pull out that last row using nrow() instead of the row number.

   - Create a new tibble (interviews_last) from that last row.

3. Using the number of rows in the interviews dataset that you found in question 2, extract the row that is in the middle of the dataset. Store the content of this middle row in an object named interviews_middle. (Hint: This dataset has an odd number of rows, so finding the middle is a bit trickier than dividing n_rows by 2. Use the median() function and what you’ve learned about sequences in R to extract the middle row!)

4. Combine nrow() with the - notation above to reproduce the behavior of head(interviews), keeping just the first through 6th rows of the interviews dataset.

R

## 1.

interviews_100 <- interviews[100, ]

## 2.

# Saving `n_rows` to improve readability and reduce duplication

n_rows <- nrow(interviews)

interviews_last <- interviews[n_rows, ]

## 3.

interviews_middle <- interviews[median(1:n_rows), ]

## 4.

interviews_head <- interviews[-(7:n_rows), ]

Factors

R has a special data class, called factor, to deal with categorical data that you may encounter when creating plots or doing statistical analyses. Factors are very useful and actually contribute to making R particularly well suited to working with data. So we are going to spend a little time introducing them.

Factors represent categorical data. They are stored as integers

associated with labels and they can be ordered (ordinal) or unordered

(nominal). Factors create a structured relation between the different

levels (values) of a categorical variable, such as days of the week or

responses to a question in a survey. This can make it easier to see how

one element relates to the other elements in a column. While factors

look (and often behave) like character vectors, they are actually

treated as integer vectors by R. So you need to be very

careful when treating them as strings.
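A short sketch makes the integer storage visible. The values reuse wall types from the dataset, but the vector itself is made up.

```r
wall_type <- factor(c("muddaub", "burntbricks", "muddaub"))

typeof(wall_type)      # "integer": how the factor is stored
class(wall_type)       # "factor": how R treats it
levels(wall_type)      # "burntbricks" "muddaub"
as.integer(wall_type)  # 2 1 2: each code points into the levels

as.character(wall_type)  # convert explicitly before doing string operations
```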

Once created, factors can only contain a pre-defined set of values, known as levels. By default, R always sorts levels in alphabetical order. For instance, if you have a factor with 2 levels:

R

respondent_floor_type <- factor(c("earth", "cement", "cement", "earth"))

R will assign 1 to the level "cement" and

2 to the level "earth" (because c

comes before e, even though the first element in this

vector is "earth"). You can see this by using the function

levels() and you can find the number of levels using

nlevels():

R

levels(respondent_floor_type)

OUTPUT

[1] "cement" "earth"

R

nlevels(respondent_floor_type)

OUTPUT

[1] 2

Sometimes, the order of the factors does not matter. Other times you

might want to specify the order because it is meaningful (e.g., “low”,

“medium”, “high”). It may improve your visualization, or it may be

required by a particular type of analysis. Here, one way to reorder our

levels in the respondent_floor_type vector would be:

R

respondent_floor_type # current order

OUTPUT

[1] earth cement cement earth

Levels: cement earth

R

respondent_floor_type <- factor(respondent_floor_type,

                                levels = c("earth", "cement"))

respondent_floor_type # after re-ordering

OUTPUT

[1] earth cement cement earth

Levels: earth cement

In R’s memory, these factors are represented by integers (1, 2), but

are more informative than integers because factors are self describing:

"cement", "earth" is more descriptive than

1, and 2. Which one is “earth”? You wouldn’t

be able to tell just from the integer data. Factors, on the other hand,

have this information built in. It is particularly helpful when there

are many levels. It also makes renaming levels easier. Let’s say we made

a mistake and need to recode “cement” to “brick”. We can do this using

the fct_recode() function from the

forcats package (included in the

tidyverse) which provides some extra tools

to work with factors.

R

levels(respondent_floor_type)

OUTPUT

[1] "earth" "cement"

R

respondent_floor_type <- fct_recode(respondent_floor_type, brick = "cement")

## as an alternative, we could change the "cement" level directly using the

## levels() function, but we have to remember that "cement" is the second level

# levels(respondent_floor_type)[2] <- "brick"

levels(respondent_floor_type)

OUTPUT

[1] "earth" "brick"

R

respondent_floor_type

OUTPUT

[1] earth brick brick earth

Levels: earth brick

So far, your factor is unordered, like a nominal variable. R does not

know the difference between a nominal and an ordinal variable. You make

your factor an ordered factor by using the ordered=TRUE

option inside your factor function. Note how the reported levels changed

from the unordered factor above to the ordered version below. Ordered

levels use the less than sign < to denote level

ranking.

R

respondent_floor_type_ordered <- factor(respondent_floor_type,

ordered = TRUE)

respondent_floor_type_ordered # after setting as ordered factor

OUTPUT

[1] earth brick brick earth

Levels: earth < brick

Converting factors

If you need to convert a factor to a character vector, you use

as.character(x).

R

as.character(respondent_floor_type)

OUTPUT

[1] "earth" "brick" "brick" "earth"

Converting factors where the levels appear as numbers (such as

concentration levels, or years) to a numeric vector is a little

trickier. The as.numeric() function returns the index

values of the factor, not its levels, so it will result in an entirely

new (and unwanted in this case) set of numbers. One method to avoid this

is to convert factors to characters, and then to numbers. Another method

is to use the levels() function. Compare:

R

year_fct <- factor(c(1990, 1983, 1977, 1998, 1990))

as.numeric(year_fct) # Wrong! And there is no warning...

OUTPUT

[1] 3 2 1 4 3

R

as.numeric(as.character(year_fct)) # Works...

OUTPUT

[1] 1990 1983 1977 1998 1990

R

as.numeric(levels(year_fct))[year_fct] # The recommended way.

OUTPUT

[1] 1990 1983 1977 1998 1990

Notice that in the recommended levels() approach, three

important steps occur:

- We obtain all the factor levels using levels(year_fct)

- We convert these levels to numeric values using as.numeric(levels(year_fct))

- We then access these numeric values using the underlying integers of the vector year_fct inside the square brackets
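The three steps can also be written out one at a time. In this sketch the intermediate names (`lv`, `lv_num`, `codes`) are introduced purely for illustration.

```r
year_fct <- factor(c(1990, 1983, 1977, 1998, 1990))

lv     <- levels(year_fct)      # step 1: "1977" "1983" "1990" "1998" (character)
lv_num <- as.numeric(lv)        # step 2: 1977 1983 1990 1998 (numeric)
codes  <- as.integer(year_fct)  # the underlying integers: 3 2 1 4 3
lv_num[codes]                   # step 3: 1990 1983 1977 1998 1990

# Same result as the one-line version
identical(lv_num[codes], as.numeric(levels(year_fct))[year_fct])  # TRUE
```

Only the levels are converted here (four values), not every element, which is why this approach is usually preferred for long factors.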

Renaming factors

When your data is stored as a factor, you can use the

plot() function to get a quick glance at the number of

observations represented by each factor level. Let’s extract the

memb_assoc column from our data frame, convert it into a

factor, and use it to look at the number of interview respondents who

were or were not members of an irrigation association:

R

## create a vector from the data frame column "memb_assoc"

memb_assoc <- interviews$memb_assoc

## convert it into a factor

memb_assoc <- as.factor(memb_assoc)

## let's see what it looks like

memb_assoc

OUTPUT

[1] <NA> yes <NA> <NA> <NA> <NA> no yes no no <NA> yes no <NA> yes

[16] <NA> <NA> <NA> <NA> <NA> no <NA> <NA> no no no <NA> no yes <NA>

[31] <NA> yes no yes yes yes <NA> yes <NA> yes <NA> no no <NA> no

[46] no yes <NA> <NA> yes <NA> no yes no <NA> yes no no <NA> no

[61] yes <NA> <NA> <NA> no yes no no no no yes <NA> no yes <NA>

[76] <NA> yes no no yes no no yes no yes no no <NA> yes yes

[91] yes yes yes no no no no yes no no yes yes no <NA> no

[106] no <NA> no no <NA> no <NA> <NA> no no no no yes no no

[121] no no no no no no no no no yes <NA>

Levels: no yesR

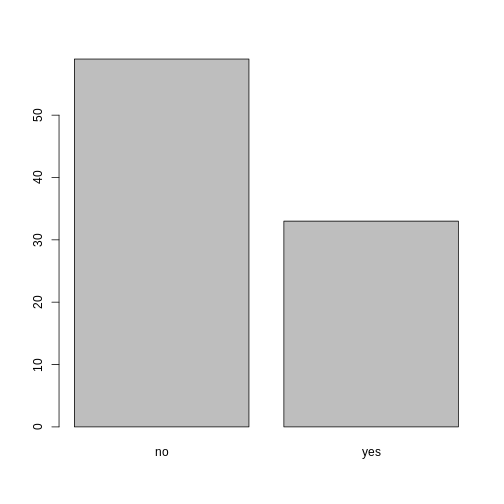

## bar plot of the number of interview respondents who were

## members of irrigation association:

plot(memb_assoc)

Looking at the plot compared to the output of the vector, we can see that in addition to “no”s and “yes”s, there are some respondents for whom the information about whether they were part of an irrigation association hasn’t been recorded, and is encoded as missing data. They do not appear on the plot. Let’s encode them differently so they can be counted and visualized in our plot.

R

## Let's recreate the vector from the data frame column "memb_assoc"

memb_assoc <- interviews$memb_assoc

## replace the missing data with "undetermined"

memb_assoc[is.na(memb_assoc)] <- "undetermined"

## convert it into a factor

memb_assoc <- as.factor(memb_assoc)

## let's see what it looks like

memb_assoc

OUTPUT

[1] undetermined yes undetermined undetermined undetermined

[6] undetermined no yes no no

[11] undetermined yes no undetermined yes

[16] undetermined undetermined undetermined undetermined undetermined

[21] no undetermined undetermined no no

[26] no undetermined no yes undetermined

[31] undetermined yes no yes yes

[36] yes undetermined yes undetermined yes

[41] undetermined no no undetermined no

[46] no yes undetermined undetermined yes

[51] undetermined no yes no undetermined

[56] yes no no undetermined no

[61] yes undetermined undetermined undetermined no

[66] yes no no no no

[71] yes undetermined no yes undetermined

[76] undetermined yes no no yes

[81] no no yes no yes

[86] no no undetermined yes yes

[91] yes yes yes no no

[96] no no yes no no

[101] yes yes no undetermined no

[106] no undetermined no no undetermined

[111] no undetermined undetermined no no

[116] no no yes no no

[121] no no no no no

[126] no no no no yes

[131] undetermined

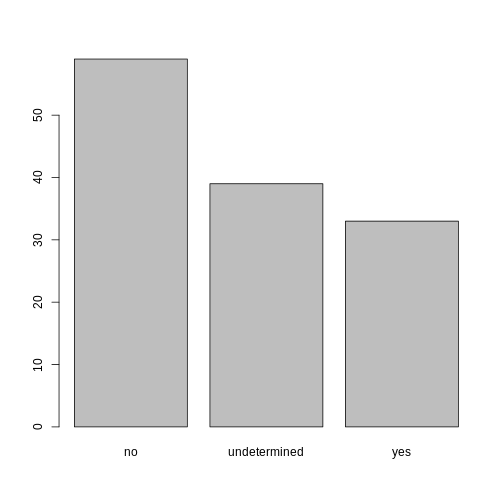

Levels: no undetermined yesR

## bar plot of the number of interview respondents who were

## members of irrigation association:

plot(memb_assoc)

R

## Rename levels.

memb_assoc <- fct_recode(memb_assoc, No = "no",

Undetermined = "undetermined", Yes = "yes")

## Reorder levels. Note we need to use the new level names.

memb_assoc <- factor(memb_assoc, levels = c("No", "Yes", "Undetermined"))

plot(memb_assoc)
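The counts behind such a bar plot can be checked with `table()`, which by default leaves out missing values just as the first plot did. The vector below is a made-up stand-in for the `memb_assoc` column.

```r
# Invented answers standing in for interviews$memb_assoc
answers <- factor(c("yes", NA, "no", "no", NA, "yes", "no"))

table(answers)                   # no = 3, yes = 2; the NAs are not counted
table(answers, useNA = "ifany")  # adds an <NA> entry with count 2
summary(answers)                 # also reports the number of NAs
```

Recoding the missing values to an explicit level, as done above with "undetermined", is what lets them show up as their own bar.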

Formatting Dates

One of the most common issues that new (and experienced!) R users

have is converting date and time information into a variable that is

appropriate and usable during analyses. A best practice for dealing with

date data is to ensure that each component of your date is available as

a separate variable. In our dataset, we have a column

interview_date which contains information about the year,

month, and day that the interview was conducted. Let’s convert those

dates into three separate columns.

R

str(interviews)

We are going to use the package

lubridate, which is included in the

tidyverse installation. Since tidyverse 2.0.0 it is attached by

library(tidyverse); with older versions it is not loaded by

default, so we load it explicitly with library(lubridate) to be safe.

Start by loading the required package:

R

library(lubridate)

The lubridate function ymd() takes a vector representing

year, month, and day, and converts it to a Date vector.

Date is a class of data recognized by R as being a date and

can be manipulated as such. The argument that the function requires is

flexible, but, as a best practice, is a character vector formatted as

“YYYY-MM-DD”.

Let’s extract our interview_date column and inspect the

structure:

R

dates <- interviews$interview_date

str(dates)

OUTPUT

POSIXct[1:131], format: "2016-11-17" "2016-11-17" "2016-11-17" "2016-11-17" "2016-11-17" ...

When we imported the data in R, read_csv() recognized

that this column contained date information. We can now use the

day(), month() and year()

functions to extract this information from the date, and create new

columns in our data frame to store it:

R

interviews$day <- day(dates)

interviews$month <- month(dates)

interviews$year <- year(dates)

interviews

OUTPUT

# A tibble: 131 × 17

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 1 God 2016-11-17 00:00:00 3 4 muddaub

2 2 God 2016-11-17 00:00:00 7 9 muddaub

3 3 God 2016-11-17 00:00:00 10 15 burntbricks

4 4 God 2016-11-17 00:00:00 7 6 burntbricks

5 5 God 2016-11-17 00:00:00 7 40 burntbricks

6 6 God 2016-11-17 00:00:00 3 3 muddaub

7 7 God 2016-11-17 00:00:00 6 38 muddaub

8 8 Chirodzo 2016-11-16 00:00:00 12 70 burntbricks

9 9 Chirodzo 2016-11-16 00:00:00 8 6 burntbricks

10 10 Chirodzo 2016-12-16 00:00:00 12 23 burntbricks

# ℹ 121 more rows

# ℹ 11 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>, day <int>, month <dbl>, year <dbl>

Notice the three new columns at the end of our data frame.

In our example above, the interview_date column was read

in correctly as a date-time (POSIXct) variable, but generally that is not

the case. Date columns are often read in as character

variables, and one can use the as_date() function to convert

them to the appropriate Date/POSIXct format.

Let’s say we have a vector of dates in character format:

R

char_dates <- c("7/31/2012", "8/9/2014", "4/30/2016")

str(char_dates)

OUTPUT

chr [1:3] "7/31/2012" "8/9/2014" "4/30/2016"

We can convert this vector to dates as follows:

R

as_date(char_dates, format = "%m/%d/%Y")

OUTPUT

[1] "2012-07-31" "2014-08-09" "2016-04-30"

The format argument tells the function the order in which to parse

the characters and identify the month, day and year. The format above is

the equivalent of mm/dd/yyyy. A wrong format can lead to parsing errors

or incorrect results.
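The "incorrect results" case is the more dangerous one because nothing fails visibly. The sketch below uses base R's `as.Date()` as a stand-in for `as_date()` so that it runs without lubridate; the format codes are the same, although the two functions may report problems differently.

```r
char_dates <- c("7/31/2012", "8/9/2014", "4/30/2016")

# Correct format: month/day/four-digit year
ok <- as.Date(char_dates, format = "%m/%d/%Y")
ok       # "2012-07-31" "2014-08-09" "2016-04-30"

# Day and month swapped: impossible dates become NA, but the second
# string still parses, silently, as 8 September instead of 9 August
swapped <- as.Date(char_dates, format = "%d/%m/%Y")
swapped  # NA "2014-09-08" NA
```

Checking a few converted values against the raw strings is a cheap way to catch this kind of silent mix-up.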

For example, observe what happens when we use a lower case y instead of upper case Y for the year.

R

as_date(char_dates, format = "%m/%d/%y")

WARNING

Warning: 3 failed to parse.

OUTPUT

[1] NA NA NA

Here, the %y part of the format stands for a two-digit

year instead of a four-digit year, and this leads to parsing errors.

Or in the following example, observe what happens when the month and day elements of the format are switched.

R

as_date(char_dates, format = "%d/%m/%y")

WARNING

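Warning: 3 failed to parse.

OUTPUT

[1] NA NA NA

Since there is no month numbered 30 or 31, the first and third dates cannot be parsed with the day and month switched. The second date fails as well here because the format still uses the two-digit %y.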

We can also use functions ymd(), mdy() or

dmy() to convert character variables to date.

R

mdy(char_dates)

OUTPUT

[1] "2012-07-31" "2014-08-09" "2016-04-30"

Content from Data Wrangling with dplyr

Last updated on 2024-05-14

Estimated time: 40 minutes

Overview

Questions

- How can I select specific rows and/or columns from a dataframe?

- How can I combine multiple commands into a single command?

- How can I create new columns or remove existing columns from a dataframe?

Objectives

- Describe the purpose of an R package and the dplyr package.

- Select certain columns in a dataframe with the dplyr function select.

- Select certain rows in a dataframe according to filtering conditions with the dplyr function filter.

- Link the output of one dplyr function to the input of another function with the ‘pipe’ operator %>%.

- Add new columns to a dataframe that are functions of existing columns with mutate.

- Use the split-apply-combine concept for data analysis.

- Use summarize, group_by, and count to split a dataframe into groups of observations, apply summary statistics for each group, and then combine the results.

dplyr is a package for making tabular

data wrangling easier by using a limited set of functions that can be

combined to extract and summarize insights from your data.

Like readr,

dplyr is a part of the tidyverse. These

packages were loaded in R’s memory when we called

library(tidyverse) earlier.

Note

The packages in the tidyverse, namely

dplyr, tidyr

and ggplot2 accept both the British

(e.g. summarise) and American (e.g. summarize)

spelling variants of different function and option names. For this

lesson, we utilize the American spellings of different functions;

however, feel free to use the regional variant for where you are

teaching.

What is an R package?

The package dplyr provides easy tools

for the most common data wrangling tasks. It is built to work directly

with dataframes, with many common tasks optimized by being written in a

compiled language (C++) (not all R packages are written in R!).

There are also packages available for a wide range of tasks including

building plots (ggplot2, which we’ll see

later), downloading data from the NCBI database, or performing

statistical analysis on your data set. Many packages such as these are

housed on, and downloadable from, the Comprehensive

R Archive Network

(CRAN) using install.packages(). This function installs the

package so that your R installation can load it with the command

library(), as you did with tidyverse

earlier.
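Installing happens once per R installation, while loading happens in every new session. A minimal sketch of that pattern, assuming an internet connection and a configured CRAN mirror; the requireNamespace() check is an addition here (not part of the lesson) so that an already installed package is not downloaded again:

```r
# Install dplyr only if it is not already available (one-off step)
if (!requireNamespace("dplyr", quietly = TRUE)) {
  install.packages("dplyr")
}

# Load the package so its functions can be used in this session
library(dplyr)

# Check that the package is now attached to the search path
"package:dplyr" %in% search()
```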

To easily access the documentation for a package within R or RStudio,

use help(package = "package_name").

To learn more about dplyr after the

workshop, you may want to check out this handy

data transformation with dplyr

cheatsheet.

Note

There are alternatives to the tidyverse packages for

data wrangling, including the package data.table.

See this comparison

for example to get a sense of the differences between using

base, tidyverse, and

data.table.

Learning dplyr

To make sure everyone uses the same dataset for this lesson, we’ll again read in the SAFI dataset that we downloaded earlier.

R

## load the tidyverse

library(tidyverse)

library(here)

interviews <- read_csv(here("data", "SAFI_clean.csv"), na = "NULL")

## inspect the data

interviews

## preview the data

# view(interviews)

We’re going to learn some of the most common

dplyr functions:

- select(): subset columns

- filter(): subset rows on conditions

- mutate(): create new columns by using information from other columns

- group_by() and summarize(): create summary statistics on grouped data

- arrange(): sort results

- count(): count discrete values
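To give a feel for these verbs before looking at each in detail, here is a small sketch on a made-up table. The toy data and the column people_per_room are hypothetical, chosen only to resemble the SAFI columns:

```r
library(dplyr)

# Hypothetical toy data, loosely modelled on the interviews dataframe
toy <- tibble(
  village   = c("God", "God", "Chirodzo", "Ruaca"),
  no_membrs = c(3, 7, 10, 6),
  rooms     = c(1, 1, 3, 2)
)

# select(): keep only some columns
two_cols <- select(toy, village, rooms)

# filter(): keep rows that meet a condition
big_houses <- filter(toy, rooms > 1)

# mutate(): add a column computed from existing ones
with_ratio <- mutate(toy, people_per_room = no_membrs / rooms)

# group_by() and summarize(): one summary row per village
by_village <- summarize(group_by(toy, village), mean_membrs = mean(no_membrs))

# arrange(): sort rows by household size
sorted <- arrange(toy, no_membrs)

# count(): number of rows per village
village_counts <- count(toy, village)

village_counts
```

Each function takes a dataframe as its first argument and returns a new dataframe, which is why they are easy to combine.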

Selecting columns and filtering rows

To select columns of a dataframe, use select(). The

first argument to this function is the dataframe

(interviews), and the subsequent arguments are the columns

to keep, separated by commas. Alternatively, if you are selecting

columns adjacent to each other, you can use a : to select a

range of columns, read as “select columns from ___ to ___.” You may have

done something similar in the past using subsetting.

select() is essentially doing the same thing as subsetting,

using a package (dplyr) instead of R’s base functions.

R

# to select columns throughout the dataframe

select(interviews, village, no_membrs, months_lack_food)

# to do the same thing with subsetting

interviews[c("village","no_membrs","months_lack_food")]

# to select a series of connected columns

select(interviews, village:respondent_wall_type)
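The same three forms can be tried on a tiny made-up table where the result is easy to check by eye. This is only a sketch; toy and its values are hypothetical and stand in for the interviews dataframe:

```r
library(dplyr)

# Hypothetical toy data standing in for the interviews dataframe
toy <- tibble(
  key_ID    = 1:3,
  village   = c("God", "Chirodzo", "Ruaca"),
  no_membrs = c(3, 7, 10),
  rooms     = c(1, 2, 3)
)

by_name  <- select(toy, village, rooms)   # columns listed one by one
by_base  <- toy[c("village", "rooms")]    # same columns with base subsetting
by_range <- select(toy, village:rooms)    # every column from village to rooms

names(by_name)
names(by_base)
names(by_range)
```

The first two calls keep the same two columns, while the range form also keeps no_membrs because it sits between village and rooms.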

To choose rows based on specific criteria, we can use the

filter() function. The argument after the dataframe is the

condition we want our final dataframe to adhere to (e.g. village name is

Chirodzo):

R

# filters observations where village name is "Chirodzo"

filter(interviews, village == "Chirodzo")

OUTPUT

# A tibble: 39 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 8 Chirodzo 2016-11-16 00:00:00 12 70 burntbricks

2 9 Chirodzo 2016-11-16 00:00:00 8 6 burntbricks

3 10 Chirodzo 2016-12-16 00:00:00 12 23 burntbricks

4 34 Chirodzo 2016-11-17 00:00:00 8 18 burntbricks

5 35 Chirodzo 2016-11-17 00:00:00 5 45 muddaub

6 36 Chirodzo 2016-11-17 00:00:00 6 23 sunbricks

7 37 Chirodzo 2016-11-17 00:00:00 3 8 burntbricks

8 43 Chirodzo 2016-11-17 00:00:00 7 29 muddaub

9 44 Chirodzo 2016-11-17 00:00:00 2 6 muddaub

10 45 Chirodzo 2016-11-17 00:00:00 9 7 muddaub

# ℹ 29 more rows

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>

We can also specify multiple conditions within the

filter() function. We can combine conditions using either

“and” or “or” statements. In an “and” statement, an observation (row)

must meet every criterion to be included in the

resulting dataframe. To form “and” statements within dplyr, we can pass

our desired conditions as arguments in the filter()

function, separated by commas:

R

# filters observations with "and" operator (comma)

# output dataframe satisfies ALL specified conditions

filter(interviews, village == "Chirodzo",

                   rooms > 1,

                   no_meals > 2)

OUTPUT

# A tibble: 10 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 10 Chirodzo 2016-12-16 00:00:00 12 23 burntbricks

2 49 Chirodzo 2016-11-16 00:00:00 6 26 burntbricks

3 52 Chirodzo 2016-11-16 00:00:00 11 15 burntbricks

4 56 Chirodzo 2016-11-16 00:00:00 12 23 burntbricks

5 65 Chirodzo 2016-11-16 00:00:00 8 20 burntbricks

6 66 Chirodzo 2016-11-16 00:00:00 10 37 burntbricks

7 67 Chirodzo 2016-11-16 00:00:00 5 31 burntbricks

8 68 Chirodzo 2016-11-16 00:00:00 8 52 burntbricks

9 199 Chirodzo 2017-06-04 00:00:00 7 17 burntbricks

10 200 Chirodzo 2017-06-04 00:00:00 8 20 burntbricks

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>

We can also form “and” statements with the &

operator instead of commas:

R

# filters observations with "&" logical operator

# output dataframe satisfies ALL specified conditions

filter(interviews, village == "Chirodzo" &

                   rooms > 1 &

                   no_meals > 2)

OUTPUT

# A tibble: 10 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 10 Chirodzo 2016-12-16 00:00:00 12 23 burntbricks

2 49 Chirodzo 2016-11-16 00:00:00 6 26 burntbricks

3 52 Chirodzo 2016-11-16 00:00:00 11 15 burntbricks

4 56 Chirodzo 2016-11-16 00:00:00 12 23 burntbricks

5 65 Chirodzo 2016-11-16 00:00:00 8 20 burntbricks

6 66 Chirodzo 2016-11-16 00:00:00 10 37 burntbricks

7 67 Chirodzo 2016-11-16 00:00:00 5 31 burntbricks

8 68 Chirodzo 2016-11-16 00:00:00 8 52 burntbricks

9 199 Chirodzo 2017-06-04 00:00:00 7 17 burntbricks

10 200 Chirodzo 2017-06-04 00:00:00 8 20 burntbricks

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>

In an “or” statement, observations must meet at least one of the specified conditions. To form “or” statements we use the logical operator for “or,” which is the vertical bar (|):

R

# filters observations with "|" logical operator

# output dataframe satisfies AT LEAST ONE of the specified conditions

filter(interviews, village == "Chirodzo" | village == "Ruaca")

OUTPUT

# A tibble: 88 × 14

key_ID village interview_date no_membrs years_liv respondent_wall_type

<dbl> <chr> <dttm> <dbl> <dbl> <chr>

1 8 Chirodzo 2016-11-16 00:00:00 12 70 burntbricks

2 9 Chirodzo 2016-11-16 00:00:00 8 6 burntbricks

3 10 Chirodzo 2016-12-16 00:00:00 12 23 burntbricks

4 23 Ruaca 2016-11-21 00:00:00 10 20 burntbricks

5 24 Ruaca 2016-11-21 00:00:00 6 4 burntbricks

6 25 Ruaca 2016-11-21 00:00:00 11 6 burntbricks

7 26 Ruaca 2016-11-21 00:00:00 3 20 burntbricks

8 27 Ruaca 2016-11-21 00:00:00 7 36 burntbricks

9 28 Ruaca 2016-11-21 00:00:00 2 2 muddaub

10 29 Ruaca 2016-11-21 00:00:00 7 10 burntbricks

# ℹ 78 more rows

# ℹ 8 more variables: rooms <dbl>, memb_assoc <chr>, affect_conflicts <chr>,

# liv_count <dbl>, items_owned <chr>, no_meals <dbl>, months_lack_food <chr>,

# instanceID <chr>

Pipes